This article provides a tutorial for building Telco Cloud / Data Centre components into the inventory module of our Personal OSS Sandpit Project.

This prototype has been a bit of a beast to build and includes components such as:

This Telco Cloud prototype can be summarised as follows (noting that this is an invented network section):

You’ll notice:

What you don’t see in this diagram are the submarine landing stations and submarine + terrestrial leased lines that are also modelled, but we’ll get to that later too.

In addition, the diagram below represents rack layouts at ML1 (Melbourne) DC.

Each of the other core sites has the same layout, except for the ONTAP-AI rack (rack 05), which is only deployed at the Melbourne DC.

Device Instances

First we start by building the locations and hierarchy of devices within them in Kuwaiba. The diagram below shows the new devices we’ve built to support this Telco Cloud model:

The tree is only partially expanded and only shows ML1. You’ll notice how these assets align with the conceptual rack layout view above.

This translates to the following FlexPod Chassis Rack View in Kuwaiba, where you’ll notice I’ve created Equipment Layout artwork for UCS, switches and storage:

While fiddling with face layouts, I accidentally stumbled on a cool little feature in Kuwaiba.

If you populate the “Model” field, you get the physical / connectivity mapping (of the UCS / Compute shelf in this case):

But if you leave the model field blank then you get the Logical / Virtualisation / Application view:

If you look closely at the view above (double-click if needed), you’ll see where the various hosted customer services (IaaS, SaaS, PaaS) are stored.

The same can be seen on the AI as a Service (AIaaS). First, we see the physical layout of the NVIDIA DGX-1:

And then we can toggle to see the hosted customer AI services running on the DGX-1:

CoLocation (CoLo) Services

Speaking of customer services, we can also model CoLo services by allocating rackspace in the COLO rack, as follows:

Perhaps more importantly, we’ve also modelled the attributes of those services, including factors such as Power Feed, Space Type, Number of RUs, Access Type, Bandwidth, etc:
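To make the idea of service attributes a little more concrete, here's a minimal Python sketch of how a CoLo service record could be represented outside of Kuwaiba. It is not Kuwaiba's data model or API. The field names mirror the attributes listed above, while `start_ru`, the service identifiers and all sample values are assumptions made purely for illustration. The helper simply flags rack units that have been allocated to more than one service.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ColoService:
    """Hypothetical CoLo service record (not Kuwaiba's actual class model)."""
    service_id: str
    customer: str
    power_feed: str
    space_type: str
    start_ru: int        # assumed attribute: lowest rack unit allocated
    num_rus: int
    access_type: str
    bandwidth_mbps: int

    @property
    def ru_range(self) -> range:
        """Rack units occupied by this service."""
        return range(self.start_ru, self.start_ru + self.num_rus)


def find_overlaps(services):
    """Return (ru, first_service_id, clashing_service_id) for double-booked RUs."""
    occupied = {}
    clashes = []
    for svc in services:
        for ru in svc.ru_range:
            if ru in occupied:
                clashes.append((ru, occupied[ru], svc.service_id))
            else:
                occupied[ru] = svc.service_id
    return clashes


# Illustrative sample data only
services = [
    ColoService("COLO-0001", "Customer 0001", "Dual", "Quarter rack", 1, 10, "Escorted", 1000),
    ColoService("COLO-0002", "Customer 0002", "Single", "Per RU", 9, 4, "Escorted", 100),
]

clashes = find_overlaps(services)
for ru, first, second in clashes:
    print(f"RU {ru} is allocated to both {first} and {second}")
```

A check like this is only useful if rack-unit positions are recorded against each service, which is an assumption here rather than something shown in the attribute list.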

Connectivity

Next, we have to model the connectivity.

Inter-Rack Connectivity

First, we’ll start with the patching within the racks, where we follow these wiring design guides from Cisco (FlexPod), which align with the FlexPod Chassis Rack View diagram above…

…and NVIDIA (ONTAP-AI) respectively

Inter-DC Connectivity

Then we establish the Inter-DC connectivity, starting with the submarine and major terrestrial links between cities (double-click for a closer look):

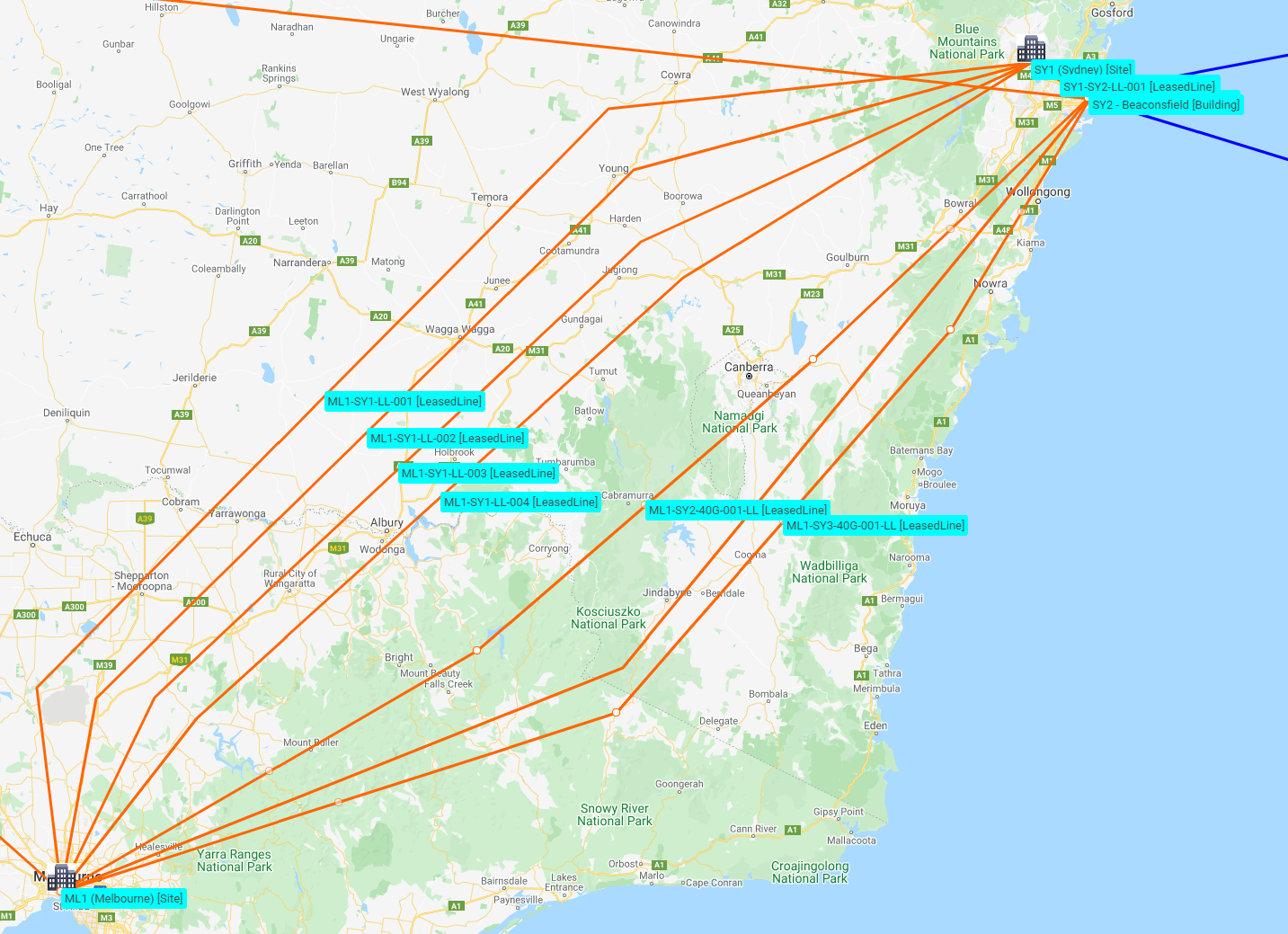

Being an Australia-based Telco Cloud provider means there are extensive Leased Line links between Melbourne and Sydney:

And also terrestrial leased-lines across to the submarine landing station in Perth to support the links to Singapore:

Submarine Landing Station to DC Connectivity

However, we also have local leased lines that allow us to link the DCs to the Submarine landing stations:

From diverse landing stations at Beaconsfield (SY2) and Paddington (SY3) to the Sydney DC (SY1):

From diverse landing stations at Manasquan (US3) and Brookhaven (US4) to the New York DC (NY1). Note that I haven’t shown the US West-Coast landing stations at Morro Bay (US1) and Hillsboro (US2) but you can see the orange terrestrial links going off to them from US3 and US4 below.

I’ve only shown a single landing station in Singapore, which lands the SeaMeWe-3 and Australia-Singapore submarine cables. However, I’ve then shown diverse routes to the Singapore DC (SG1).

End-to-End DC Connectivity

All of the leased lines are now in place, but we now need to establish end-to-end routes through all these leases… connecting the dots as it were. Here are:

Diverse Routes showing all hops from ML1 to NY1…

…and Diverse Routes from ML1 to SG1
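As a rough illustration of what "diverse" means when stitching leases into end-to-end routes, the Python sketch below checks two candidate routes for shared intermediate sites and shared leases. The hop sequences and lease identifiers are invented for this example (the site codes come from the sections above, but which site connects to which is an assumption), and this is a plain Python sketch rather than anything Kuwaiba provides.

```python
from typing import NamedTuple


class Hop(NamedTuple):
    a_end: str
    z_end: str
    lease_id: str  # hypothetical leased-line / cable identifier


def sites(route):
    """Ordered list of sites a route passes through."""
    return [route[0].a_end] + [hop.z_end for hop in route]


def is_contiguous(route):
    """True if each hop starts where the previous hop ended."""
    return all(prev.z_end == nxt.a_end for prev, nxt in zip(route, route[1:]))


def shared_risk(route_a, route_b):
    """Return (shared intermediate sites, shared lease ids) for two routes."""
    sites_a, sites_b = sites(route_a), sites(route_b)
    shared_sites = set(sites_a[1:-1]) & set(sites_b[1:-1])
    shared_leases = {h.lease_id for h in route_a} & {h.lease_id for h in route_b}
    return shared_sites, shared_leases


# Assumed hop sequences from ML1 to NY1, for illustration only
route_a = [
    Hop("ML1", "SY1", "LL-0001"), Hop("SY1", "SY2", "LL-0003"),
    Hop("SY2", "US1", "SUB-0001"), Hop("US1", "US3", "LL-0005"),
    Hop("US3", "NY1", "LL-0007"),
]
route_b = [
    Hop("ML1", "SY1", "LL-0002"), Hop("SY1", "SY3", "LL-0004"),
    Hop("SY3", "US2", "SUB-0002"), Hop("US2", "US4", "LL-0006"),
    Hop("US4", "NY1", "LL-0008"),
]

shared_sites, shared_leases = shared_risk(route_a, route_b)
print("Shared intermediate sites:", shared_sites)
print("Shared leases:", shared_leases)
```

In this made-up example the two routes use different leases at every hop but both transit SY1, which is exactly the kind of shared-risk point a diversity check is meant to surface.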

Customer Leased-line Connectivity

Then we build the leased lines to customer sites at Geelong (from ML1 DC), Queens (from NY1 DC) and Kuala Lumpur (from SG1 DC):

Internet Leased Lines

And finally the Leased Lines from the POI (Point of Interconnect) rack in the DC to local ISPs in each city. These are shown symbolically just to track their Leased Line identifiers rather than actual routes:

Mapping the MPLS Network

Now that all the physical connectivity is in place, we can record all the attributes of the MPLS network. You’ll remember that the first diagram above showed all the ports, IP addresses / subnets and BGP AS numbers.

Firstly the IP / subnet allocations

The right-hand pane shows the four major subnets (MPLS core, customer leases, Loopbacks and Customer Lease ranges respectively).

Just as a single example, the left-hand pane shows that 10.1.1.1 has been assigned to port Gi0/0/0/0 on the PE router at ML1 from the 10.1.1.0/28 subnet.
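If you want to sanity-check an allocation like this outside of the inventory tool, Python's standard `ipaddress` module is handy. The snippet below is just a sketch using the address and subnet from the example above. The variable names are mine and nothing here talks to Kuwaiba.

```python
import ipaddress

# Values taken from the example above
subnet = ipaddress.ip_network("10.1.1.0/28")
port_ip = ipaddress.ip_address("10.1.1.1")

# Is the assigned address actually inside the subnet?
in_subnet = port_ip in subnet

# Usable host addresses (network and broadcast addresses excluded)
usable_hosts = list(subnet.hosts())

print(f"{port_ip} in {subnet}: {in_subnet}")
print(f"{len(usable_hosts)} usable hosts, from {usable_hosts[0]} to {usable_hosts[-1]}")
```

A /28 leaves 14 usable host addresses, so 10.1.1.1 is the first assignable address in that range.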

The diagram below then shows all of the MPLS Links in Kuwaiba. Note that I’ve manually overlaid the blue cloud to make it easier to see the core DC Interconnect network, with the P routers (in the POI rack in each DC) on its perimeter. PE routers (in the PE rack in the DCs) are shown connecting to the P routers, but also connecting to the Customer Edge (CE) routers at customer sites.

Then finally we show the MPLS attribute mappings.

The upper pane shows pools of VRFs (VPN00001 for the demonstrated customer’s network and another for the backbone network), as well as AS allocations.

The lower pane shows an example VRF and how it’s associated with:

Customer Service Mappings

The following shows Service Mappings for Customer 0001. They’ve racked up a lot of services here! This service inventory can be used to assist with billing the customer each month.
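As a simple sketch of how a service inventory like this might feed a monthly billing run, the Python below counts services per customer and per service type. The service types are the ones discussed in this article, but the service identifiers and the flat tuple structure are invented for illustration and don't reflect how Kuwaiba stores or exports service mappings.

```python
from collections import Counter, defaultdict

# Hypothetical export of service mappings: (customer, service type, service id)
service_inventory = [
    ("Customer 0001", "IaaS", "SVC-0001"),
    ("Customer 0001", "IaaS", "SVC-0002"),
    ("Customer 0001", "SaaS", "SVC-0003"),
    ("Customer 0001", "AIaaS", "SVC-0004"),
    ("Customer 0001", "CoLo", "SVC-0005"),
    ("Customer 0001", "Leased Line", "SVC-0006"),
]


def summarise_services(inventory):
    """Count services per customer, broken down by service type."""
    summary = defaultdict(Counter)
    for customer, service_type, _service_id in inventory:
        summary[customer][service_type] += 1
    return summary


summary = summarise_services(service_inventory)
for customer, counts in summary.items():
    print(customer)
    for service_type, count in sorted(counts.items()):
        print(f"  {service_type}: {count}")
```

A billing system would then apply its own rates to these counts. Pricing is deliberately left out here because it isn't part of the inventory model.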

Summary

I hope you enjoyed this introduction to how we’ve modelled a sample Carrier Cloud / DC in the Inventory module of our Personal OSS Sandpit Project. Click on the link to step back to the parent page and see what other modules and/or use-cases are available for review.

More to come on SDN and SD-WAN in future articles.

If you think there are better ways of modelling this network, or if I’ve missed some of the nuances or practicalities, I’d love to hear your feedback. Leave us a note in the contact form below.
