In the last month or so, eight different carriers, from a variety of places around the planet, have reached out to us to discuss their ambitions for reaching AN Level 4. There’s clearly a major groundswell behind it. The thing about AN Level 4 is it sounds like a clear destination. But it doesn’t […]

Telco keeps worrying about a skills shortage. But a group of Solent University students just reminded me that the talent is still there. We just need to give them problems worth solving. That’s why I wanted to take a moment to congratulate the Solent University team that recently developed an automated network prediction pipeline. Their […]

Most OSS experts focus on the implementation and post-go-live performance aspects of a transformation. That makes perfect sense, because they’re the most visible phases. But by the time implementation begins, many of the decisions that shape success or failure have already been set in place. The pre-implementation phase is often underestimated – treated as a […]

Everyone wants to know who the best OSS vendor is. But since there are 500+ OSS/BSS vendors, that question usually starts an argument, rather than the transformation you want to kick-start. The better question is which vendor fits you best – your architecture, budget, maturity, constraints and operating model. PAOSS’s Inverted Pyramid approach starts […]

Telco keeps talking about being scared of an imminent skills cliff. But perhaps the real problem is not just that experienced people are leaving. It’s that the industry has stopped feeling like the natural destination for the next generation of brilliant minds. Meanwhile, the industry’s hardest problems – automation, AI-native operations, complex transformation, data integrity, […]

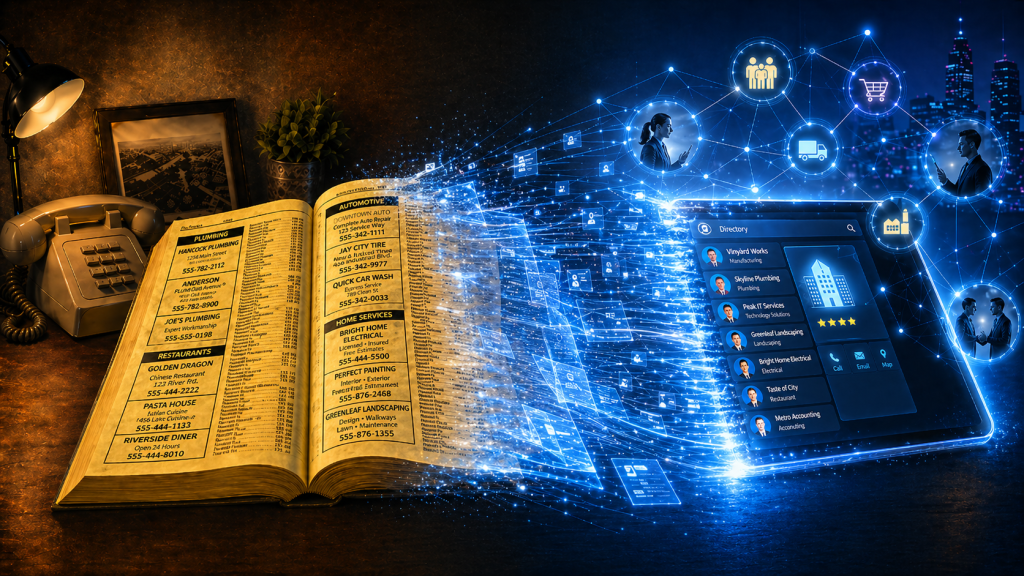

Phone books now look like a relic from another era. Stone. Cold. Dead. But they were once one of the most powerful business growth engines in the world. Owned by telcos! As they declined in importance, telcos didn’t just lose a directory. They totally forgot they were matchmakers. Phone books were never just paper directories. […]

The old proverb above really resonates. I love this industry. You could even say I’m Passionate About OSS. And like many of us, I’ve been a beneficiary of trees that other people planted – frameworks, knowledge, standards, examples, terminology, APIs, architectures and shared wisdom. But it feels like we’re now on the cusp of generational […]

Every carrier knows it needs a clear product catalogue, with well-defined service and resource definitions. But it is not always obvious where to start. Across the world, most carriers sell broadly similar families of products, whether broadband, mobile, voice, VPN or bundles. At the same time, every operator needs to differentiate, which means no two […]

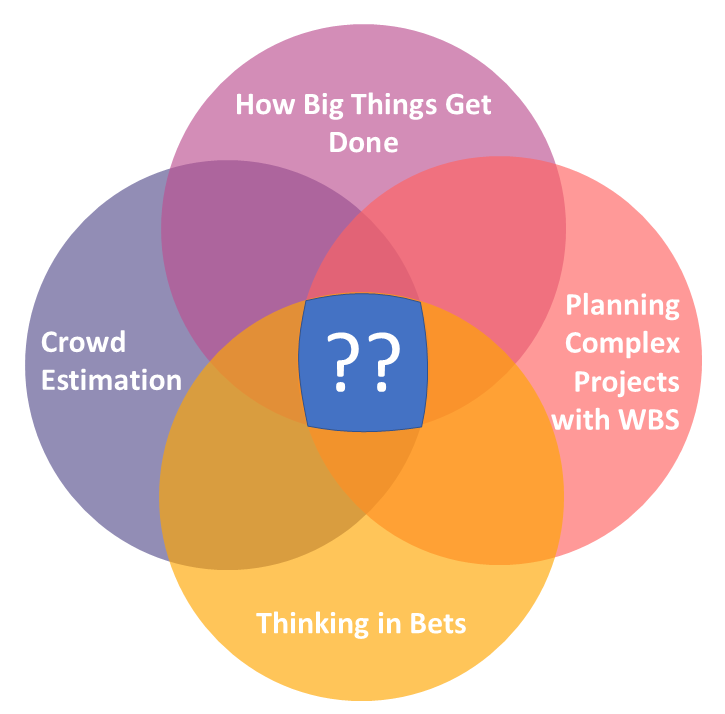

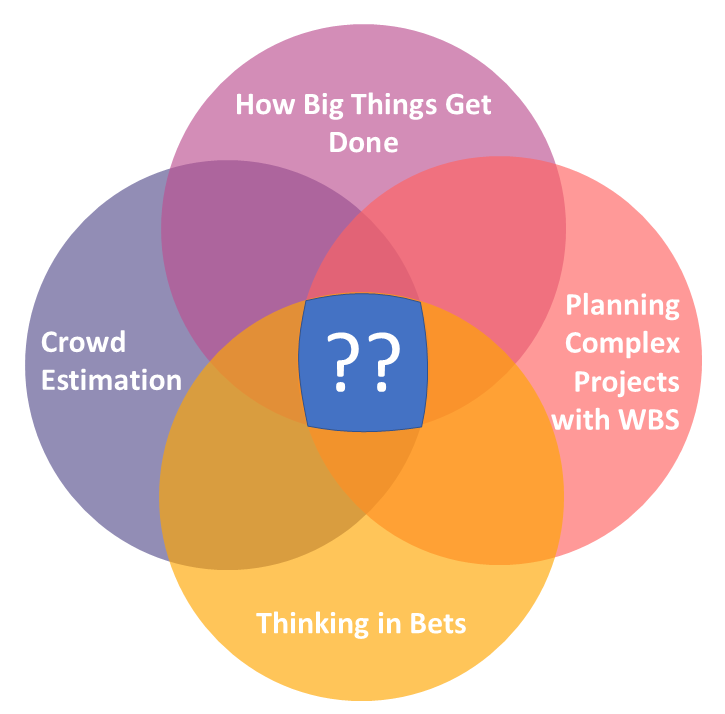

After reading the book, How Big Things Get Done (by Bent Flyvbjerg and Dan Gardner) about why megaprojects fail, I found it fascinating that it overlapped with another recent article. Flyvbjerg and Gardner’s core argument is that successful complex projects are not won by speed of commitment, but by better odds and better sequencing, which […]

I have a really important question for you to ask yourself today. It’s a question that shapes a lot of my thinking about how I can help the OSS/BSS/telco industry. How does what I do make more money for clients? Not just what your company does. Not what your brand says on the website. Not […]

I would have heard the phrase “your network is your net worth” early in my career and probably nodded along without really believing it (or even comprehending it if I’m being honest). It sounded like one of those catchy lines that people repeat because it sounds wise. At the time, I was much more focused […]

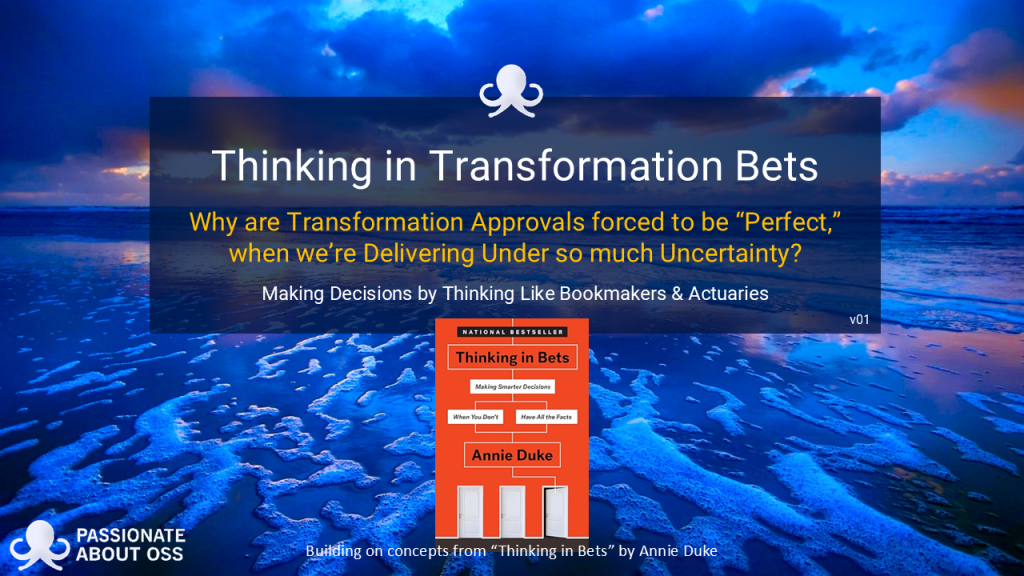

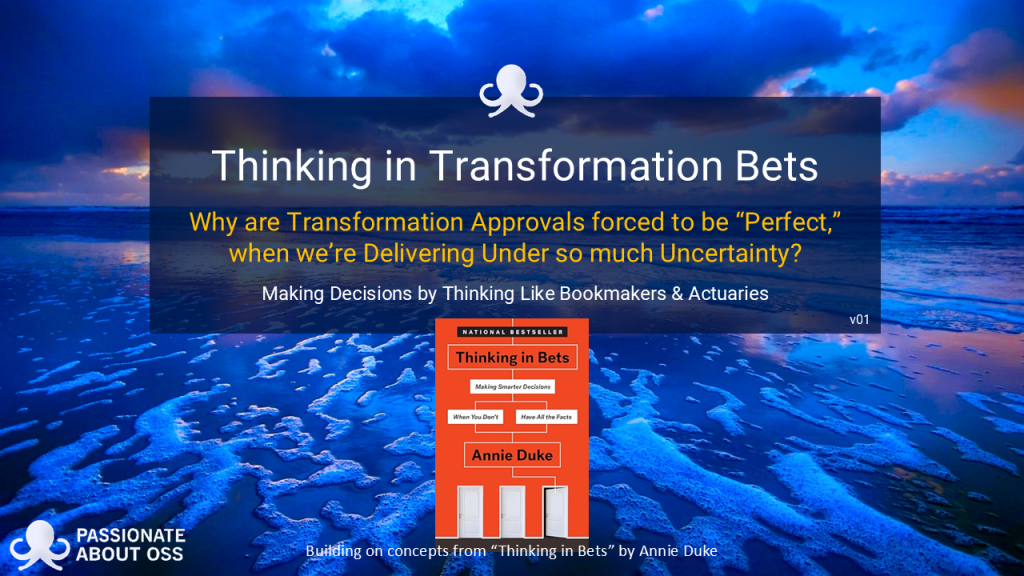

We’re releasing our latest report today. Click on the image below to download it. Why are transformation approvals (eg business case approvals, vendor selections, project transformation decisions) forced to look perfect when delivery is anything but? That is the quiet contradiction at the heart of many (most?) digital transformation programmes. We build business cases as […]

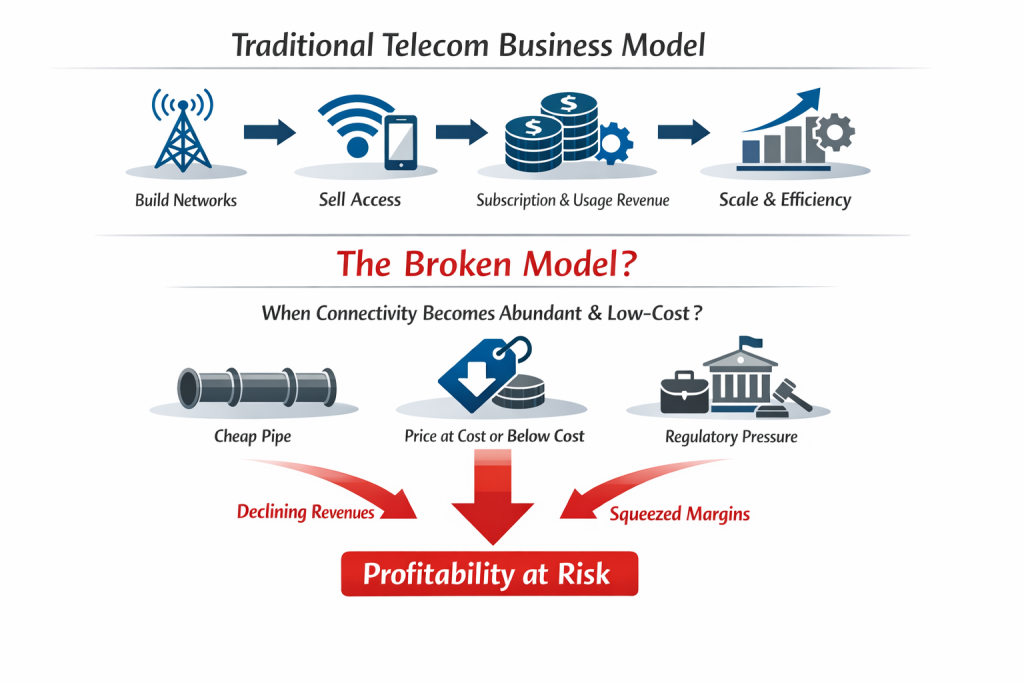

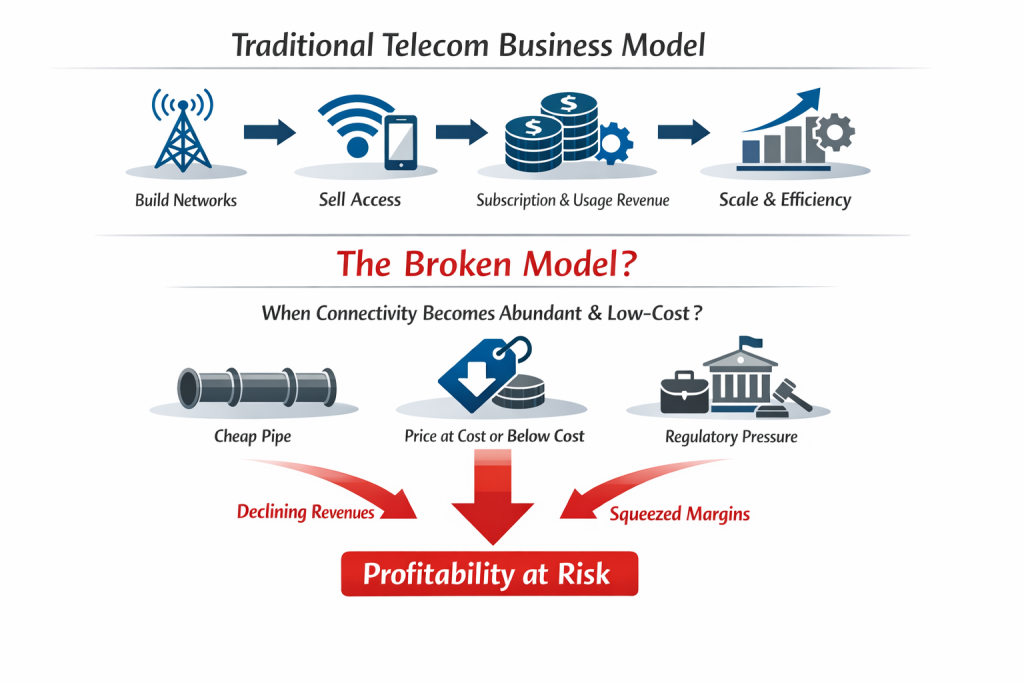

As seen in the diagram, for most of telecom history, the core business model has been simple: build networks; sell access to those networks; recover capital (revenue) through subscription and usage revenues; and protect margin through scale and operational efficiency. But what happens if (when? after?) that model breaks? What happens if connectivity becomes so […]

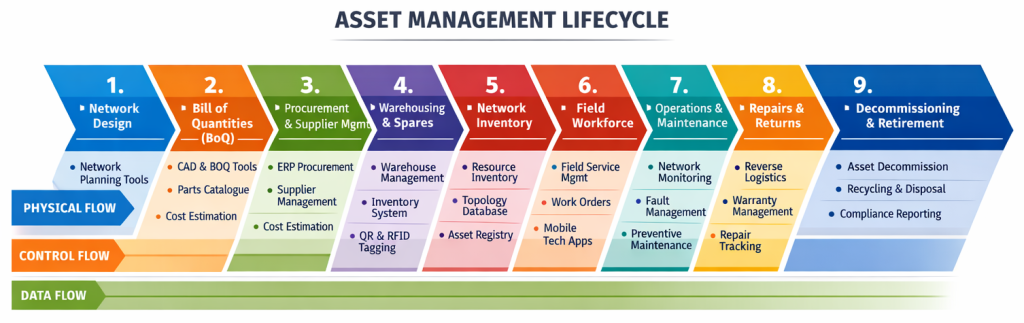

If you’re leading an OSS/BSS transformation, one of the hardest parts is not knowing what needs to happen first (or second, third, etc). Not in theory. In practice. Most large transformation programmes don’t fail because of a lack of ambition. They fail because the early sequencing is off or steps are overlooked. Vendor conversations begin […]

With MWC upon us again, I thought I’d pose a question about how our industry, and the 500+ OSS/BSS vendor market within it, is currently evolving. Telcos spend billions on transformation programmes every year. They talk about massive disruption like cloud-native stacks, open architectures, AI-driven automation and next-generation digital experiences. On paper, it sounds like […]

Quants have become the rockstars of modern share trading – extracting powerful signals from oceans of data at near real-time speed. Trading firms invest billions in them and in infrastructure that will give them even the slightest timing edge. Yet while telcos drown in dashboards, the next competitive advantage may belong to the “NOC-star” – […]

One of the things I find incredibly interesting when I look at the Simplified TAM diagram below is that, of all the arrows indicating a workflow, only one has systems that aren’t really designed to manage the operational workflow. Assurance has trouble tickets. Fulfilment has service orders. Field operations has work orders. Even billing […]

The OSS/BSS vendor landscape just crossed another threshold. The Passionate About OSS Blue Book OSS/BSS Vendor Directory has now grown to over 750 listings, giving buyers and sellers one of the most comprehensive views of the telecom software ecosystem available today. But this milestone isn’t the only part of today’s story. We’ve just introduced a […]

Two goldfish are dropped into a new tank. One turns to the other and asks, “Do you know how to fire the cannon on this thing?” That single gag captures the moment when what you expected collapses and the script is flipped. It has similarities with what users experience when a software transformation is forced […]

What happens when a Software Engineer, Enterprise Architect and Network Ops Engineer walk into a bar?… You know that head-slap moment when you realise software is more hindrance than help? I had one such experience circa 2005, when I watched a genuinely brilliant network ops engineer spend an entire afternoon navigating tools […]