After reading How Big Things Get Done (by Bent Flyvbjerg and Dan Gardner), a book about why megaprojects fail, I was fascinated by how much it overlapped with another recent article.

Flyvbjerg and Gardner’s core argument is that successful complex projects are won not by speed of commitment, but by better odds and better sequencing. Interestingly, that aligns closely with our recent article about Thinking in Bets (a book by Annie Duke).

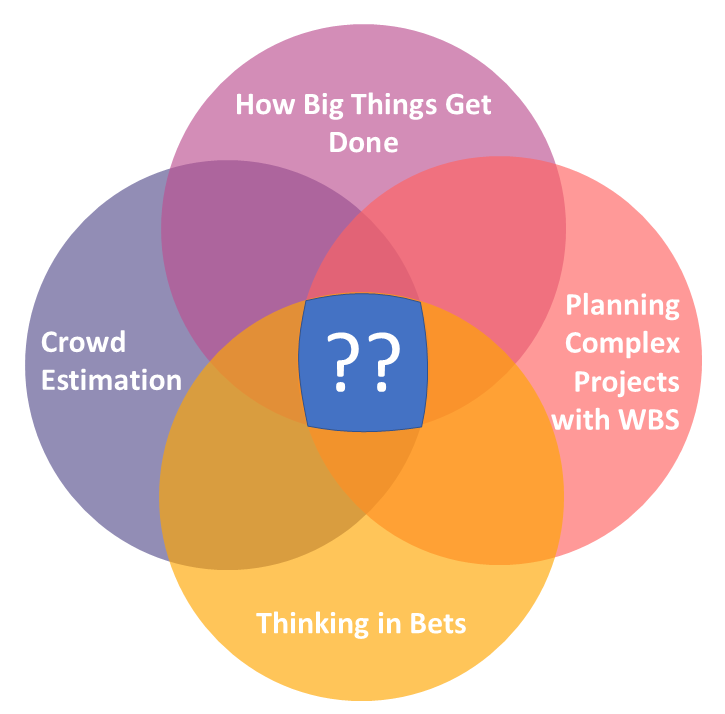

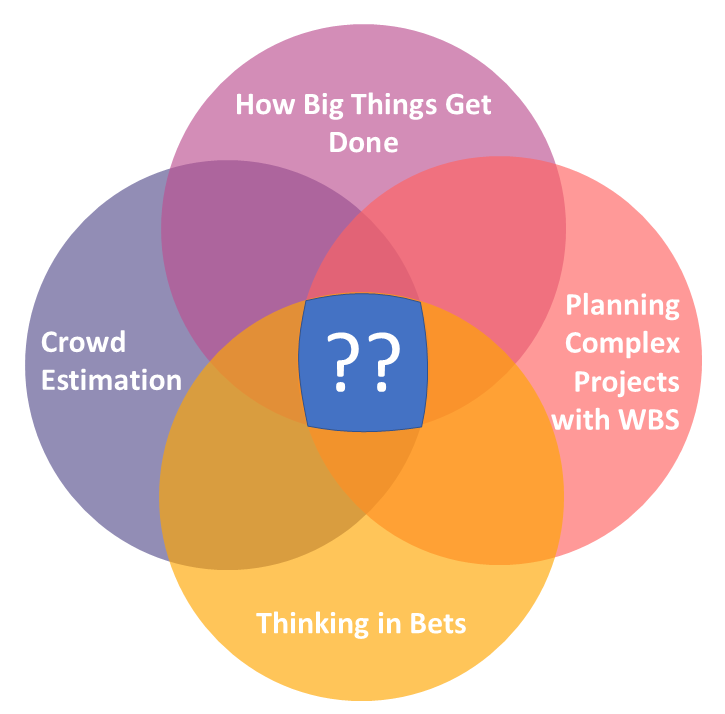

These two books also overlap with a couple of other ideas we talk about regularly, so I thought I’d share a thought experiment with you today.

That centre point is where the thought experiment begins.

But first, let’s align some of the principles.

Flyvbjerg and Gardner’s central insight is brutal in its simplicity. Big projects fail because humans are wired to underestimate risk, overestimate control and commit too early to numbers, designs and narratives that feel right. Across a database of more than 16,000 projects, only 8.5% hit both cost and time targets and just 0.5% hit cost, time and benefits together. They call this the Iron Law of megaprojects: over budget, over time, under benefits, over and over again. They are also explicit that this is a probabilistic law, not a deterministic one.

That’s why it makes perfect sense to cross-reference it with Thinking in Bets. How Big Things Get Done gives the empirical warning. Thinking in Bets gives the operating model. One tells us why certainty is dangerous. The other tells us how to make decisions around uncertainty anyway.

Combining the two changes how we present the programme.

Instead of asking, “Will this programme deliver by Q4 next year?”, we ask, “What are the odds, based on comparable programmes, that this workstream lands by Q4 next year within acceptable quality and budget bounds?”

Instead of saying, “The integration will succeed”, we say, “We currently assign a 55% probability of clean integration in Wave 1, rising to 75% if the POC achieves sign-off of priority capabilities”.
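
To see what a statement like that quietly implies, here is a minimal sketch using the law of total probability. The 55% and 75% come from the example above. The 60% chance that the POC achieves sign-off is purely an assumed figure for illustration, and the function name is hypothetical.

```python
def implied_probability_if_poc_fails(p_overall, p_if_poc_passes, p_poc_passes):
    """Solve p_overall = p_poc_passes * p_if_poc_passes + (1 - p_poc_passes) * x for x.

    x is the probability of clean integration if the POC does NOT achieve sign-off.
    """
    return (p_overall - p_poc_passes * p_if_poc_passes) / (1 - p_poc_passes)


# 0.55 and 0.75 are the figures quoted in the text; 0.60 is an assumption.
p_fail_branch = implied_probability_if_poc_fails(0.55, 0.75, 0.60)
print(f"Implied odds of clean integration if the POC misses sign-off: {p_fail_branch:.0%}")
```

Under that assumed 60%, the same forecast implies only about a one-in-four chance of clean integration if the POC falls short. Stating the bet this way makes the value of the POC visible rather than hiding it inside a single headline number.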

Most transformation business cases begin with a grand question disguised as a plan: will this programme deliver the target state by the target date for the target budget?

That question is almost useless.

It’s too broad, too political and too late-binding. By the time the truth becomes visible, too much money and reputation have already been committed. Flyvbjerg describes this kind of premature lock-in as the commitment fallacy, the rush to commit before goals, alternatives, risks and delivery conditions have been explored properly.

A better opening question is: what would we need to believe, and what would we need to prove, for this transformation to deserve the next commitment?

.

A second perspective from How Big Things Get Done is to recognise that the risk profile of complex transformations (like OSS/BSS) is not merely uncertain, but often fat-tailed. Flyvbjerg shows that many project categories are exposed to extreme downside events, not tidy bell-curve variation. His research found that large IT projects can be particularly vicious, with 18% suffering cost overruns above 50% and those tail projects averaging 447% overrun. In other words, the danger is not just being a bit late or a bit over budget. The danger is being catastrophically wrong in ways that invalidate the whole investment case.
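
A rough piece of arithmetic shows why the tail dominates. The sketch below uses only the two figures quoted above (18% of projects in the tail, averaging 447% overrun). The average overrun of the remaining 82% is not given in the text, so the values passed in are assumptions for illustration only.

```python
TAIL_SHARE = 0.18          # share of large IT projects with >50% cost overrun (from the text)
TAIL_MEAN_OVERRUN = 4.47   # mean overrun of those tail projects, 447% (from the text)


def expected_overrun(body_mean_overrun):
    """Blend the tail with the other 82% of projects.

    body_mean_overrun is an ASSUMED average overrun for the non-tail projects.
    """
    return TAIL_SHARE * TAIL_MEAN_OVERRUN + (1 - TAIL_SHARE) * body_mean_overrun


# Even if every non-tail project landed exactly on budget (an assumption),
# the tail alone drags the portfolio average to roughly 80% over budget.
print(f"{expected_overrun(0.0):.0%}")

# With an assumed 10% average overrun for the non-tail projects.
print(f"{expected_overrun(0.10):.0%}")
```

The typical project in this picture sits below a 50% overrun, yet the average is pulled far higher by the tail. A single-point ROI figure cannot express that gap.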

If the tail risk is real, then business cases with a specific ROI number are not enough. A better approach is to treat the programme as a portfolio of linked bets. Some are reversible and cheap, like proving an API mediation pattern or piloting a reconciliation workflow. Some are semi-reversible, like rolling out a new service model to one product family. Some are highly irreversible, like replacing the core charging stack or cutting over the master inventory source. The more irreversible the bet, the more outside-view evidence, simulation and contingency you should demand before committing.

.

A third concept is “What’s your Lego?”, which means that modularity, repetition and standardisation are not administrative niceties but probability-improving mechanisms. Reusable patterns, repeatable migration waves and standard integration designs reduce variance and improve learning effects. They make the programme more forecastable, which is precisely what you want in a bet-driven model. This is the exact intent of our work on TM Forum’s GB1011 (Transformation Project Framework), designing Transformation Plans using WBS. Whilst every OSS/BSS project is different, there are generally repeatable patterns we can re-use.

.

A fourth perspective is that one of the greatest risks in any project is not external surprise but ourselves: our biases, our lock-in, our willingness to spin numbers and our tendency to confuse commitment with competence. In OSS/BSS, that shows up everywhere. One of Flyvbjerg’s strongest lessons is that the outside view is essential because the inside view almost always underestimates complications.

Sponsors anchor to dates before discovery is complete. Vendors overstate out-of-the-box fit. Delivery teams underplay data defects because they want mobilisation to continue. Steering committees interpret confidence language as weakness, so people give certainty theatre instead of honest probabilities. That is exactly why Thinking in Bets belongs here. It provides a language for uncertainty that is disciplined rather than defeatist.

Our estimates are more like guesstimates. Thinking in Bets gives us a framework, but drawing on the knowledge of the crowd should, theoretically at least, make estimates more accurate. There is an important asterisk: the crowd often outperforms individual experts or conventional expert panels in uncertain, probabilistic domains, but only when the crowd is designed properly.

Expert intuition is strongest when the environment is sufficiently repeatable and the expert has had opportunities to learn from past experiences. I see this as having a panel of experts reviewing the probability of individual activities (within their field of expertise) within a WBS rather than predicting across the project as a whole (which is always bespoke and not sufficiently bounded, unlike certain tasks within the project).

In this case we use the crowd as a forecasting and calibration engine, not as a substitute for transformation planning / estimation or executive accountability.
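
As a minimal sketch of that calibration engine, assume each panel member gives an optimistic, median and cautious estimate (P10, P50, P90) for one WBS activity, and that we pool them by taking the median of each. Median pooling is just one simple option chosen for illustration, and the function name and the numbers are hypothetical.

```python
from statistics import median


def pool_estimates(estimates):
    """Pool a panel's (p10, p50, p90) estimates for ONE activity.

    Takes the median of each percentile across the panel, so a single
    extreme opinion cannot drag the pooled view on its own.
    """
    p10s, p50s, p90s = zip(*estimates)
    return median(p10s), median(p50s), median(p90s)


def p50_spread(estimates):
    """Gap between the highest and lowest P50: a crude disagreement signal."""
    p50s = [p50 for _, p50, _ in estimates]
    return max(p50s) - min(p50s)


# Hypothetical panel estimates, in days, for a single data-cleansing activity.
panel = [(10, 15, 30), (8, 12, 20), (12, 20, 45)]

print(pool_estimates(panel))  # pooled (P10, P50, P90)
print(p50_spread(panel))      # a wide spread suggests the activity needs more discovery
```

The pooled numbers feed the plan, while the spread flags where the panel disagrees enough that more evidence is needed before anyone commits.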

.

A major transformation should not be a promise to be defended. It has the potential to be a portfolio of bets to be priced, sequenced and repriced as evidence accumulates.

That does not make leadership weaker. It makes it more honest, more adaptive and, in all likelihood, more successful.

Our flower-petal Venn Diagram suggests:

- How Big Things Get Done – provides the warning that major projects are routinely misestimated, especially when leaders ignore outside-view evidence

- Thinking in Bets – gives the logic that every work package is a forecast rather than a certainty

- WBS – provides the decomposition of a project into smaller activities (eg mapping of responsibilities, dependencies, tasks, etc). The WBS becomes a living “map of bets” that can be continually re-estimated as new information becomes available. Any uncertainty on underlying activities is rolled up through the WBS.

- Crowd-estimation – provides effort and likelihood estimates (eg probability of finishing by date, probability of finishing within effort/cost band, confidence score, dependency risk, estimate distributions, etc). This could be expressed as P10 (optimistic date/effort), P50 (median) and P90 (cautious) effort ranges to provide a Gantt chart with a confidence envelope rather than a specific due date.
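
One way the roll-up could work is a small Monte Carlo simulation. The sketch below rests on several assumptions that are mine rather than anything prescribed by the books or the framework: the WBS and its numbers are hypothetical, each task duration is modelled as a right-skewed lognormal fitted to its P50 and P90, tasks within a branch run in sequence, and branches run in parallel.

```python
import math
import random
from statistics import quantiles

Z90 = 1.2816  # standard normal z-score for the 90th percentile

# Hypothetical WBS: branch -> list of (task, P50 weeks, P90 weeks).
WBS = {
    "data_migration": [("profile", 4, 7), ("cleanse", 6, 14), ("load", 3, 6)],
    "integration": [("api_mediation", 5, 9), ("e2e_test", 6, 15)],
}


def sample_task(p50, p90, rng):
    """Draw one duration from a lognormal whose median is p50 and whose
    90th percentile is p90 (a modelling assumption, chosen for its right skew)."""
    sigma = math.log(p90 / p50) / Z90
    return rng.lognormvariate(math.log(p50), sigma)


def simulate(wbs, runs=10_000, seed=42):
    """Return (P10, P50, P90) of programme duration in weeks.

    Assumes tasks in a branch are sequential (durations add up) and
    branches are parallel (the slowest branch sets the finish date).
    """
    rng = random.Random(seed)
    totals = []
    for _ in range(runs):
        branch_durations = [
            sum(sample_task(p50, p90, rng) for _, p50, p90 in tasks)
            for tasks in wbs.values()
        ]
        totals.append(max(branch_durations))
    deciles = quantiles(totals, n=10)  # nine cut points: P10, P20, ..., P90
    return deciles[0], deciles[4], deciles[8]


p10, p50, p90 = simulate(WBS)
print(f"P10 {p10:.1f} wks, P50 {p50:.1f} wks, P90 {p90:.1f} wks")
```

Re-running the simulation whenever the crowd reprices a task gives the confidence envelope for the Gantt chart. The gap between P50 and P90 is typically wider than the gap between P10 and P50, which is the asymmetry a single due date hides.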

Previously, this would all have been a bit unwieldy. With statistical analysis and machine learning, it feels more achievable today.

Because in the end, the goal of transformation is not to sound certain at kickoff. It’s to improve the odds of getting the thing done.

.

So what is the actual thought experiment?

What if a major OSS transformation were planned in a WBS, but each important branch was also treated as a bet, anchored in repeatable / modular thinking and repriced by a knowledgeable crowd before each irreversible commitment?

It might create a transformation model that is less vulnerable to optimism, less dependent on persuasion by seniority, more modular, more evidence-driven and more honest about where confidence is real and where it is simply being performed… and one that is continually refined whilst the project is in-flight rather than being a one-shot estimate.

If an OSS transformation is treated as a portfolio of decomposed bets, then the programme plan should not pretend every task has a single, deterministic finish date. Traditional Gantt charts are useful, but they also create a dangerous illusion. A neat bar stretching from one date to another suggests a level of certainty that rarely exists in major transformations, especially where data migration, integration, reconciliation, testing and cutover dependencies are involved.

That matters because one of Flyvbjerg’s core warnings is that major projects do not merely suffer ordinary variation. Many are exposed to skewed and sometimes fat-tailed risks, where downside events are larger and more frequent than leaders expect. In practice, that means schedule risk is often not symmetrical. The likely problem is not that a task finishes two weeks early or two weeks late with equal probability. It is that it might finish roughly on time, slightly late or catastrophically late if hidden dependencies, quality defects or rework loops appear.

This is important because many transformation dashboards still tell leaders only what has already happened. A task is green, amber or red. A milestone is on track or off track. But by the time a milestone turns visibly red, the underlying uncertainty has often been growing for months.

What are your thoughts?